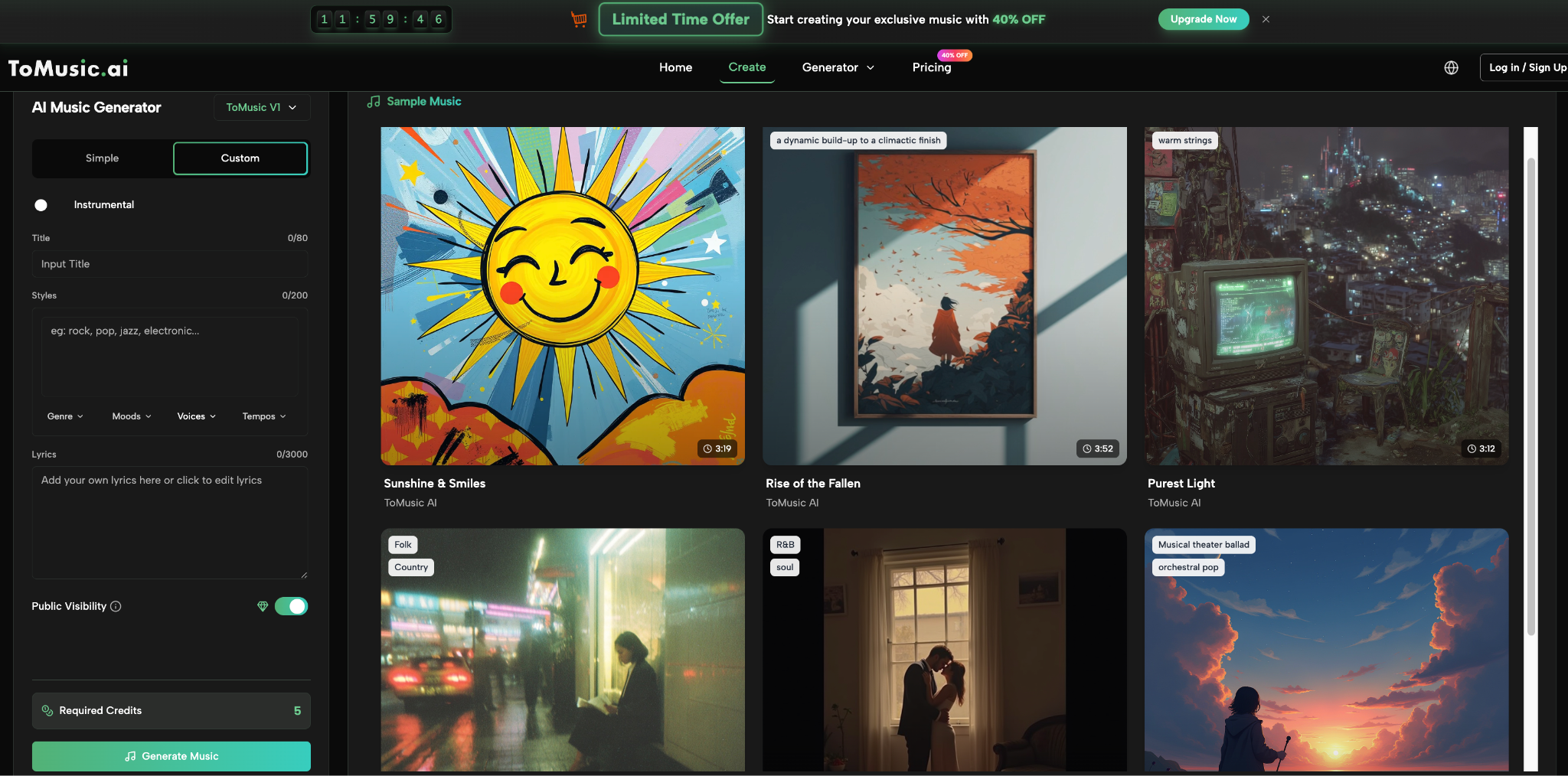

I tested ToMusic with a question that feels simple but is actually difficult: can it help a song match an emotion more accurately after revision? Many tools can create a track from a prompt, but an AI music generator becomes more valuable when it helps the user move from a rough emotional guess toward a more precise musical feeling.

This matters because emotion is often the reason people want music in the first place. A creator wants a video to feel warm. A lyric writer wants a chorus to feel honest. A brand wants a track to feel calm but confident. A storyteller wants a scene to feel lonely but not hopeless. These differences are subtle, and subtle emotion is hard to prompt. If a platform only understands broad labels like happy or sad, the output may sound acceptable but emotionally inaccurate.

That is why I focused this test on revision. I did not expect ToMusic to understand the exact feeling on the first try. Instead, I wanted to see whether I could guide the result closer through clearer prompts, lyric changes, and listening feedback. Under that standard, the platform felt useful because each version helped me name the emotion more precisely.

Why Emotional Accuracy Is Hard To Generate

Music is not just a collection of sounds. It carries emotional weight through tempo, harmony, vocal tone, instrument choice, rhythm, and structure. A single word like hopeful can lead to many different musical outcomes.

Broad Emotion Words Are Not Enough

During testing, prompts based only on broad mood words produced results that were listenable but not always specific. “Sad” could become dramatic. “Hopeful” could become too bright. “Calm” could become flat.

The more useful prompts included emotional boundaries. Instead of asking for sadness, I asked for quiet sadness with a small sense of acceptance. Instead of asking for hope, I asked for restrained hope after a difficult moment.

The First Output Became An Emotional Draft

The first generation was rarely the final emotional answer. It was more like an emotional sketch. It showed what the platform understood and what I needed to clarify.

Listening Helped Me Refine The Feeling

After hearing each version, I could name what was wrong. Too sentimental. Too fast. Too polished. Too dramatic. Too distant. These reactions became instructions for the next prompt.

Testing One Emotion Across Several Versions

For this review, I tested a single emotional idea across multiple revisions: a song about leaving something behind without bitterness. I wanted it to feel reflective, warm, and quietly hopeful.

The First Version Felt Too Melancholic

My first prompt used words like reflective, emotional, and soft. The generated result leaned more melancholy than I wanted. It sounded beautiful in parts, but it felt heavier than the emotional target.

I realized that I had described the sadness but not the acceptance. The next prompt added phrases like gentle closure, calm optimism, and warm chorus. That moved the result closer to the target.

The Second Version Felt Too Uplifted

The second result corrected the heaviness, but it became slightly too bright. It lost some of the quiet emotional tension that made the idea interesting.

This was a useful lesson. Emotional accuracy is not about pushing one feeling harder. It is about balancing contrast. I needed the track to hold both loss and peace.

The Third Version Came Closest

The third prompt described the song as soft acoustic pop with restrained vocals, slow drums, warm piano, and a chorus that feels hopeful without becoming celebratory. That version felt closest to the target.

The improvement did not come from complicated music theory. It came from more careful emotional wording. That made the test feel accessible and practical.

The Four-Step Revision Process I Used

The public ToMusic workflow supported this emotional revision process without requiring complicated setup.

The Review Stage Became The Real Creative Stage

The most important step was not pressing generate. It was listening honestly. Each result asked a question: is this the feeling I meant?

When I could identify the mismatch clearly, my next prompt improved. “Less dramatic” was better than “make it better.” “Warmer vocal, slower tempo, less cinematic” was more useful than “more emotional.”

How Lyrics Changed Emotional Accuracy

After testing prompts, I added a short lyric. This changed the emotional test because the words carried their own meaning.

The Lyric Needed Simpler Emotional Movement

My first lyric draft had too many images. It tried to say everything at once. When ToMusic generated the song, the emotional focus felt blurred.

After reducing the lyric to clearer phrases, the generated result felt more direct. The emotion became easier to hear because the words were not fighting for attention.

The Chorus Needed A Stronger Emotional Turn

The original chorus repeated the same feeling as the verse. That made the song feel emotionally flat. I revised the chorus to introduce acceptance rather than more regret.

This improved the result. The verse could carry memory, while the chorus could carry release. ToMusic made that structural need easier to hear.

Text-Based Creation Makes Emotional Testing Easier

The strongest part of the experience was that emotional revision could happen through language. I did not need to adjust instruments manually or rewrite music notation. I could refine the emotional brief.

Words Became Emotional Steering Tools

The value of text-to-music generation showed up clearly here. Changing words like dramatic, restrained, intimate, bright, lonely, warm, or hopeful changed the direction of the generated music.

This made me more aware of my own language. If I could not describe the feeling clearly, the output became less focused. If I described it with care, the result moved closer.

The Process Helped Me Understand The Song Better

The test was not only about whether ToMusic understood me. It was also about whether I understood my own target emotion. The platform forced me to be more specific.

By the third version, I had a better description of the song than I had at the beginning. That alone made the tool useful.

A Table Of Emotional Testing Results

The table below summarizes how different prompt changes affected the testing experience.

| Emotional Target | First Issue | Prompt Adjustment | Resulting Improvement |
| --- | --- | --- | --- |
| Quiet hope | Too sad | Added gentle closure | More balanced mood |
| Warm memory | Too dramatic | Asked for restraint | More intimate feeling |
| Soft sadness | Too flat | Added slow build | Better emotional movement |
| Personal lyric | Too crowded | Simplified lines | Clearer vocal delivery |
| Reflective chorus | Too repetitive | Added emotional turn | Stronger song structure |
| Background emotion | Too noticeable | Asked for subtle support | Better content fit |

The Pattern Was Consistent

The platform became more useful when the emotional target became more specific. Broad adjectives created broad results. Contextual emotion created more focused results.

The best prompts were not long for the sake of being long. They were clear. They described the exact emotional balance I wanted.

Where ToMusic Felt Strongest Emotionally

ToMusic felt strongest when I used it to explore emotional variations. It helped me hear how small changes in wording could shift the entire feeling of a song.

It Helped Separate Similar Emotions

Sadness, nostalgia, loneliness, peace, and acceptance can overlap. The platform helped me test those differences by generating versions that leaned in different directions.

Instead of saying “this is emotional,” I could decide which emotion I actually meant. That made the creative process more deliberate.

It Turned Emotional Taste Into Action

Before testing, I only had a vague feeling. After testing, I had actionable notes: slower tempo, less drama, warmer vocal, simpler chorus, more hopeful ending.

This is valuable because many creators know when something feels wrong but cannot explain why. ToMusic helped turn that feeling into clearer revision language.

Where Emotional Testing Still Has Limits

Even with careful prompting, ToMusic did not always hit the exact target. Emotional nuance is difficult, and generative music can interpret words differently than expected.

The Platform Cannot Know Private Memories

If a song is based on a personal experience, the platform only knows what the user writes. It cannot understand private context unless the user translates it into musical direction.

The user must describe not only the story, but the desired emotional performance. Should it feel raw, calm, distant, forgiving, intimate, or unresolved?

Some Results Felt Too Polished

A few versions sounded clean but not emotionally vulnerable enough. They were musically pleasant, yet they did not match the fragile feeling I wanted.

This is not only a technical issue. Sometimes a song feels more real when it is restrained. Prompting for softness, simplicity, or intimacy helped address that.

How I Would Improve Future Tests

After this emotional accuracy test, I would approach ToMusic with a more structured revision method.

Start By Naming The Emotional Contrast

Instead of asking for one mood, I would define a contrast. For example: sad but accepting, hopeful but restrained, romantic but mature, calm but not empty.

Most real emotions are mixed. Defining the mixture helps avoid generic output.

Revise One Variable At A Time

I would avoid changing every part of the prompt at once. If the vocal feels wrong, change the vocal direction first. If the tempo feels wrong, change the tempo next.

This makes it clearer which adjustment improved the result. It turns testing into a learning process.
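One way to keep that discipline is to store the prompt as named parts and allow only one part to change per revision. The sketch below is a minimal illustration of that habit, not a ToMusic feature or API: `PromptBrief`, `revise`, and `changed_fields` are hypothetical names, and the field values are borrowed from the third prompt described earlier. It only builds the text you would paste into the tool.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class PromptBrief:
    """Hypothetical prompt structure for illustration; not a ToMusic API."""
    genre: str
    vocal: str
    tempo: str
    instruments: str
    emotion: str

    def render(self) -> str:
        # Join the parts into one plain-text prompt to paste into the tool.
        return ", ".join(getattr(self, f.name) for f in fields(self))


def revise(brief: PromptBrief, **change: str) -> PromptBrief:
    """Return a new brief with exactly one field changed."""
    if len(change) != 1:
        raise ValueError("change one variable per revision")
    return replace(brief, **change)


def changed_fields(old: PromptBrief, new: PromptBrief) -> list[str]:
    """List the field names that differ between two briefs."""
    return [f.name for f in fields(old)
            if getattr(old, f.name) != getattr(new, f.name)]


v1 = PromptBrief(
    genre="soft acoustic pop",
    vocal="restrained vocals",
    tempo="slow drums",
    instruments="warm piano",
    emotion="hopeful without becoming celebratory",
)

# The vocal felt wrong, so only the vocal direction changes in this revision.
v2 = revise(v1, vocal="warmer, more intimate vocals")

print(v2.render())
print(changed_fields(v1, v2))  # ['vocal']
```

Keeping each version as a separate object makes it easy to note next to every generated track which single change produced it.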

My Honest Verdict On Emotional Accuracy

ToMusic was not perfect at emotional accuracy on the first try, but it became more useful through revision. That is an important distinction. A tool does not need to guess the full emotional target instantly to be valuable. It needs to help the user move closer.

The Platform Rewards Thoughtful Revision

When I listened carefully and revised clearly, the outputs improved. When I used vague emotional words, the results felt broader and less personal.

ToMusic generates the music, but the user directs the feeling. That role is important. The best results came when I treated myself not as a passive requester, but as an emotional editor.

Why This Test Changed My View Of ToMusic

This test made me see ToMusic less as a machine for instant songs and more as a space for emotional exploration. It helped me define what I meant by hope, sadness, warmth, and closure. It gave me versions to compare and language to improve.

For creators, that is a meaningful strength. Many people do not need a perfect track immediately. They need to understand what their idea is trying to feel like. ToMusic helped with that.

The Real Value Is Emotional Discovery

The most useful result was not only a generated song. It was a clearer emotional direction.

I would use ToMusic again when I need to explore subtle moods, revise lyrics emotionally, or test whether a song idea feels honest. Its strength is not just generation. Its strength is helping users listen their way toward clarity.